How VR became a key to unlocking animal behaviour

When you think about virtual reality, you probably think about who it’s for: gamers, lovers of tech, or people with a desire to make the outside world disappear. Or maybe you think about the headset itself, with its sweaty interior and its tendency to induce motion sickness. Isn’t it a niche product used only for entertainment?

And yet, were you to look beyond the headset, you would find that the market for creating an ultra-realistic immersive world has given rise to a thriving offshoot that’s far removed from the glare of game arcades. For the last two decades, biologists have been using VR as a tool to reveal fundamental principles about the neuronal circuitry underpinning behaviour in animals. And in Konstanz, behavioural biologists are joining forces with computer scientists to push the limits of this technology and gain previously inaccessible insights into decision-making in animal collectives.

“Virtual reality is a game changer for the study of animal collective behaviour,” says Iain Couzin, a biologist at the University of Konstanz and a director at the Max Planck Institute of Animal Behavior, where he leads the Department of Collective Behaviour. He is also co-director of the University of Konstanz’s Cluster of Excellence "Centre for the Advanced Study of Collective Behaviour".

What we call “VR” is technically defined as an immersive environment in which the user’s senses (such as vision or hearing) are artificially stimulated to alter their perception of reality. While we usually think of people using VR, animals can also be placed in virtual environments. But instead of wearing a headset, the animal is placed with its whole body inside the virtual space.

“With VR being so commercialised for humans, it’s hard for people to think past the headset and to imagine instead an animal immersed in a realistic and dynamic, yet synthetic world,” says Hemal Naik, a PhD student working with Couzin in the Max Planck Department of Collective Behaviour and with Nassir Navab at the Technical University of Munich.

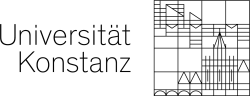

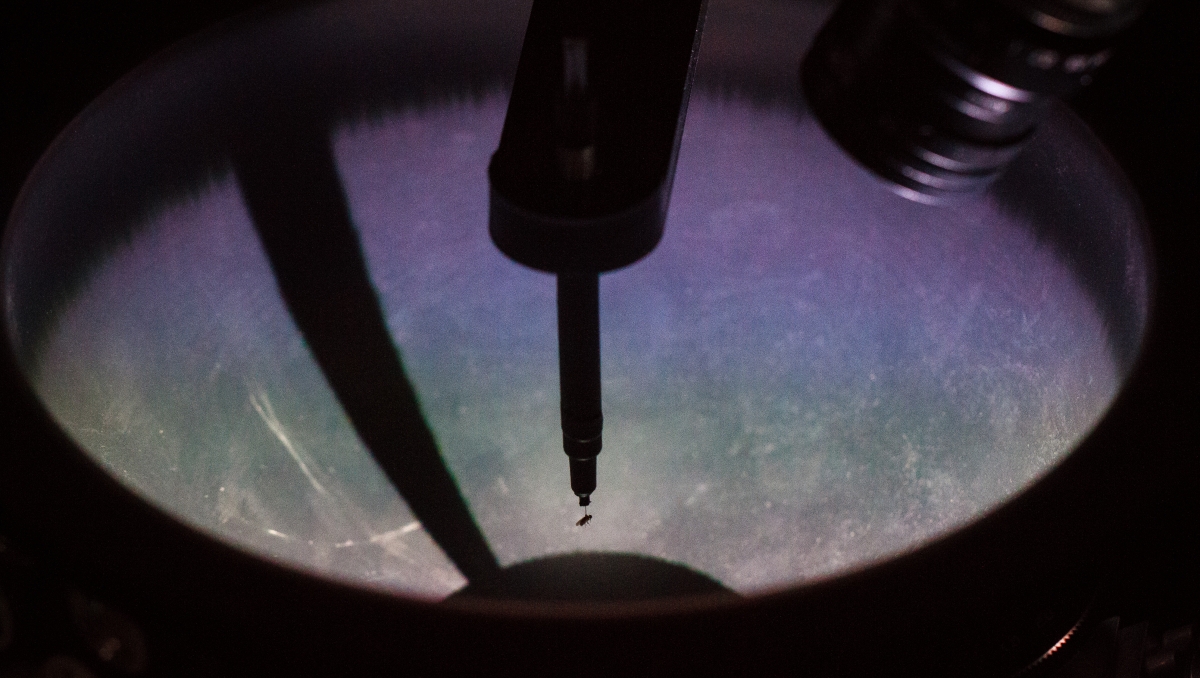

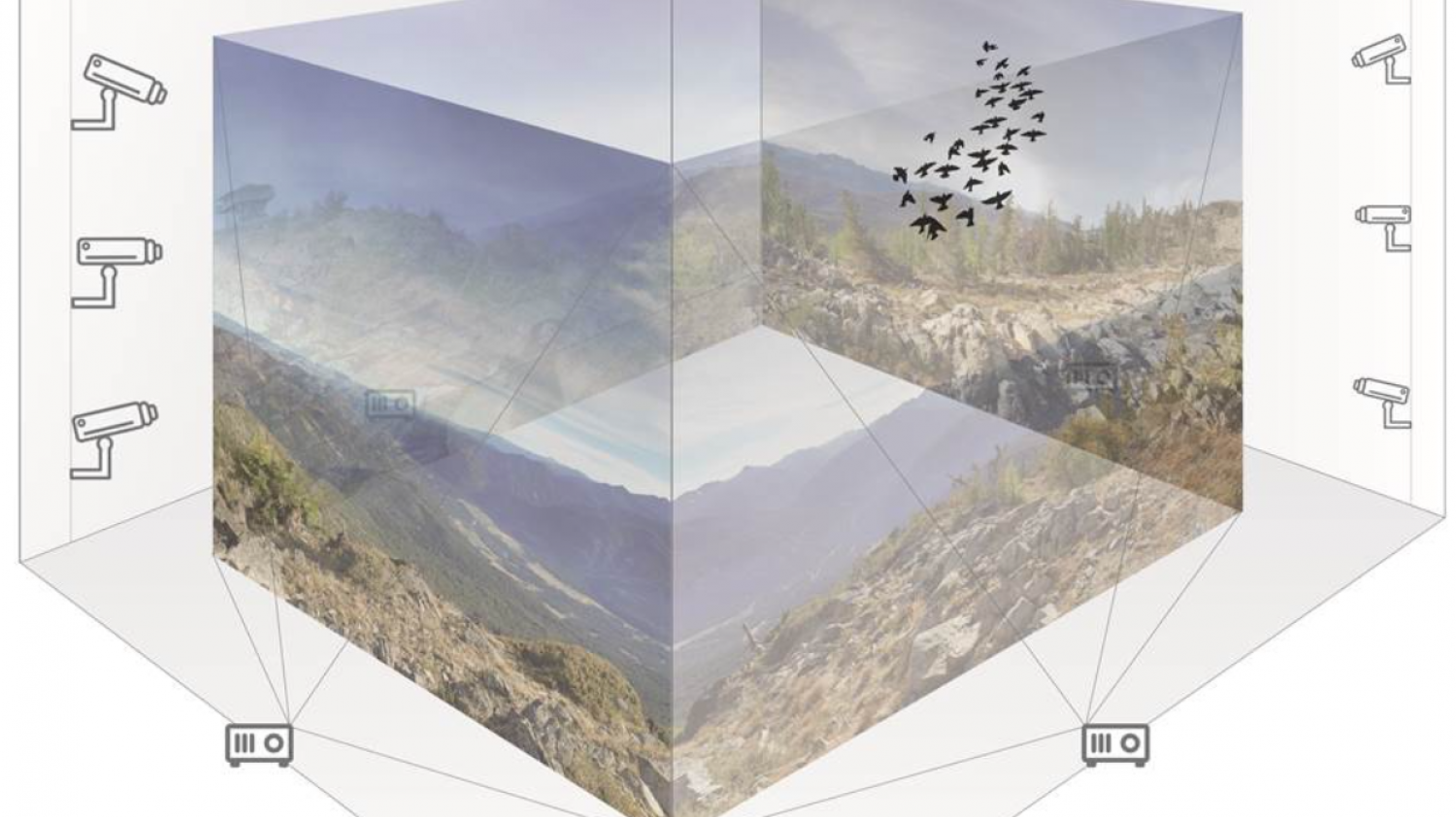

Iain Couzin’s group are studying how flies make perceptual decisions using VR. The image shows a tethered VR setup (Hybrid Design), in which the fly is fixed in one spot and can only rotate. The fly’s movement is tracked with high-resolution cameras, and the visualisations are updated in real time using projectors. Such setups can be used to study sensorimotor responses, flight behaviour and decision-making. Image by Simon Gingins for the Couzin Lab.

Multiple VR systems can be linked together to create conditions for social behaviour in a virtual manner. In such a virtual social scenario, currently used in Iain Couzin’s research, each animal may interact with a group of virtual conspecifics that are projections of real animals in other VR systems. Image: Cluster of Excellence "Centre for the Advanced Study of Collective Behaviour".

Naik and his collaborators recently wrote a paper, “Animals in Virtual Environments”, for the journal IEEE Transactions on Visualization and Computer Graphics (TVCG), which he will present on March 25 at the annual IEEE VR conference, widely acknowledged as the premier venue for VR researchers to deliver new findings. After the coronavirus outbreak was declared a pandemic, the conference switched to an all-online format and, as you would expect from a community of VR scientists, the organisers are hosting it on a virtual platform for the first time.

But the conference will offer another first: Naik’s presentation will be the first time an animal study has been included in the programme. “So far, the VR industry has been all about the human user, so the idea of putting animals into virtual worlds might sound crazy to most industry experts,” he says.

https://www.youtube.com/watch?v=JFOag4gUTdc

So why would you put animals into virtual worlds? To answer this, it’s important to understand the power of artificial stimulation as a technique for studying behaviour. Artificial stimuli can reliably elicit behaviours in animals during experiments, and so help reveal how animals make decisions. In a landmark study from 1950, for example, Niko Tinbergen presented cardboard models of birds to gull chicks and showed that the chicks reacted to the models as they would to their parents. So instead of being constrained by what nature can deliver and when, experimenters can tweak properties of the artificial stimuli and plan the timing of delivery to systematically test behaviours.

For decades after Tinbergen, scientists continued to use physical models in experiments, later adding video recordings once it became clear that animals could see images played on screens. But they soon ran into problems. Video playback has a major limitation: it does not react to the animal viewing it. Yet in the real world, action and reaction are intricately linked. If one spider acts aggressively, a spider observing it should respond. So for biologists to turn digital stimuli into something closer to reality, they had to link action and reaction. “They had to close the loop,” says Naik.

Animal experiments broke into true VR territory in the early 2000s, when technology became capable of simulating the real world in two important ways: the animal could see the world from an egocentric perspective and, crucially, that world reacted in real time.

Initially, the problem was solved by keeping the animal still in space. Insects and rodents were presented with virtual stimuli—such as lines that moved according to the animal’s movement—while walking on a treadmill so the perspective remained correct. These early “closed loop” VR experiments ushered in a new generation of studies to investigate neuronal circuit dynamics during behaviour. Navigation in virtual mazes allowed scientists to pinpoint circuits that underlie cognition, learning, and memory. “These were the big money questions for animal VR at the time,” says Katie Conen, a neuroscientist in Couzin’s department who uses VR to examine how schooling fish integrate social and non-social information.
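The closed-loop principle can be sketched in a few lines. This is a minimal illustration, not the software used in any of the studies mentioned: it assumes a hypothetical treadmill sensor that reports, each frame, how far the animal walked and how much it turned, integrates those readings into a position in a simple 2D virtual world, and then computes where a virtual landmark should appear relative to the animal’s heading.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    """Animal's position and heading in the virtual world (heading in radians)."""
    x: float = 0.0
    y: float = 0.0
    heading: float = 0.0  # 0 points along +x; positive is counter-clockwise


def update_pose(pose: Pose, forward: float, turn: float) -> Pose:
    """Integrate one frame of treadmill motion into the virtual pose.

    `forward` and `turn` stand in for readings from a hypothetical treadmill
    sensor: distance walked and change of heading during this frame.
    """
    heading = (pose.heading + turn) % (2 * math.pi)
    return Pose(
        x=pose.x + forward * math.cos(heading),
        y=pose.y + forward * math.sin(heading),
        heading=heading,
    )


def egocentric_bearing(pose: Pose, target_x: float, target_y: float) -> float:
    """Angle of a virtual landmark relative to the animal's heading, in [-pi, pi]."""
    world_angle = math.atan2(target_y - pose.y, target_x - pose.x)
    relative = world_angle - pose.heading
    return math.atan2(math.sin(relative), math.cos(relative))  # wrap the angle


# Closed loop: each frame, read the sensor, update the world, redraw the stimulus.
landmark = (0.0, 5.0)
pose = Pose()
fake_readings = [(1.0, 0.0), (1.0, math.pi / 2), (1.0, 0.0)]  # (forward, turn)
for forward, turn in fake_readings:
    pose = update_pose(pose, forward, turn)
    bearing = egocentric_bearing(pose, *landmark)
    # A real rig would now redraw the landmark at `bearing` on the display.
```

Because the landmark’s bearing is recomputed from the animal’s own movement on every frame, the stimulus reacts to the animal, which is precisely what plain video playback cannot do.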

https://www.youtube.com/watch?v=tBNHPk-Lnkk

But just as an anamorphic illusion looks correct from a single vantage point and distorted from anywhere else, these experiments worked only because movement was artificially restricted. Recreating real-world conditions, however, requires both realistic stimuli and naturalistic movement. “That automatically means freely moving VR,” says John Stowers, an engineer at loopbio, a company that makes virtual reality platforms for animals.

In 2017, Stowers published a study, co-authored by Couzin and led by Andrew Straw of the University of Freiburg and Kristin Tessmar-Raible of the University of Vienna, that delivered the first generalised VR solution for freely moving animals. In the FreemoVR system, individual animals are embedded in a photorealistic synthetic world in which they can interact with virtual organisms, or inspect and move around virtual obstacles, just as they would in the real world. Graphics are projected into the volume to create a virtual world in full 3D with depth cues. To preserve the illusion, the animal’s movement is tracked and the graphics are updated accordingly.
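At its core, keeping a freely moving animal’s perspective correct is a geometry problem: as the animal moves, each virtual object must be redrawn where the animal’s line of sight to that object meets the display. The sketch below is not FreemoVR’s code. It assumes a single flat display and an already tracked eye position, both simplifications; curved displays and calibrated projectors, as used in real systems, take considerably more work.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def project_to_screen(eye: Vec3, virtual_obj: Vec3,
                      plane_point: Vec3, plane_normal: Vec3) -> Optional[Vec3]:
    """Where on a flat display to draw `virtual_obj` so that, seen from `eye`,
    it lines up with the object's intended 3D position.

    Intersects the line of sight eye -> object with the display plane.
    Returns None if that line never reaches the screen in front of the eye.
    """
    direction = _sub(virtual_obj, eye)
    denom = _dot(plane_normal, direction)
    if abs(denom) < 1e-12:
        return None  # line of sight runs parallel to the screen
    t = _dot(plane_normal, _sub(plane_point, eye)) / denom
    if t <= 0:
        return None  # screen lies behind the animal along this line of sight
    return (eye[0] + t * direction[0],
            eye[1] + t * direction[1],
            eye[2] + t * direction[2])


# Screen: the plane x = 10. Virtual object "behind" the screen at (20, 4, 2).
wall_point, wall_normal = (10.0, 0.0, 0.0), (1.0, 0.0, 0.0)
obj = (20.0, 4.0, 2.0)
print(project_to_screen((0.0, 0.0, 0.0), obj, wall_point, wall_normal))
print(project_to_screen((0.0, 4.0, 0.0), obj, wall_point, wall_normal))
```

When the tracked eye moves 4 units sideways, the drawing position on the screen shifts from (10, 2, 1) to (10, 4, 1). That shift is the motion-parallax cue that makes the object appear to sit at a fixed depth beyond the screen, and it is why tracking and rendering have to run together.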

In his research programme in Konstanz, Couzin has applied this platform to study fish, locusts, and flies, and in doing so has begun to decipher the pathways of communication in animal collectives.

“Not only is this hard to observe directly, it is practically impossible without VR,” says Couzin.

The advantages that VR brings to studying collective behaviour are twofold. First, it allows researchers to run different experimental scenarios quickly. In the old days, a researcher who wanted to know how fish adjust their behaviour to the orientation of the school would have had to observe the school for however long it took to change direction.

“Sometimes that could take days,” says Daniel Calovi, a postdoctoral researcher at the Cluster of Excellence “Centre for the Advanced Study of Collective Behaviour” who is using VR to study how visual stimuli control behaviour in fish. But in a virtual environment, the virtual fish school can be programmed to change direction at the touch of a button. “You could run this experiment as many times as you need in a day,” he says.

Second, VR addresses a major bottleneck in the study of collective behaviour, what Couzin calls “the curse of causality.” The complexity of social feedback in animal groups makes it extremely difficult to infer causality (who is influencing whom) in processes such as decision-making. With VR, however, it is possible to systematically explore all the conditions of interest. “Virtual reality offers a means of controlling causality,” says Couzin.

One way is to decouple traits that are normally coupled, for example making an animal that looks dominant behave like a subordinate. “Usually, it’s hard to separate how an animal looks from how it behaves, but with VR we can control these variables in precise ways that are not possible in the real world,” says Couzin.
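Decoupling traits in this way amounts to a factorial experimental design. As a minimal sketch, assuming two hypothetical factors with two levels each (the labels below are illustrative, not taken from any actual study), the snippet builds a randomised trial schedule in which every combination of a virtual animal’s appearance and behaviour, including combinations that rarely or never co-occur in real animals, is presented equally often.

```python
import itertools
import random
from collections import Counter
from typing import List, Tuple

# Hypothetical factor levels for a virtual conspecific. In VR, appearance and
# behaviour can be set independently of each other.
APPEARANCES = ("dominant-looking", "subordinate-looking")
BEHAVIOURS = ("dominant behaviour", "subordinate behaviour")


def make_schedule(repeats: int, seed: int = 0) -> List[Tuple[str, str]]:
    """Return a shuffled list of (appearance, behaviour) trials.

    Every combination of the two factors appears exactly `repeats` times,
    including the 'mismatched' ones that are hard to find in nature.
    """
    conditions = list(itertools.product(APPEARANCES, BEHAVIOURS))
    trials = conditions * repeats
    random.Random(seed).shuffle(trials)  # randomise order to avoid order effects
    return trials


schedule = make_schedule(repeats=3)
counts = Counter(schedule)
for condition, n in sorted(counts.items()):
    print(condition, n)
```

Crossing the factors fully, rather than sampling whatever combinations happen to occur, is what lets each trait’s influence be separated from the other’s.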

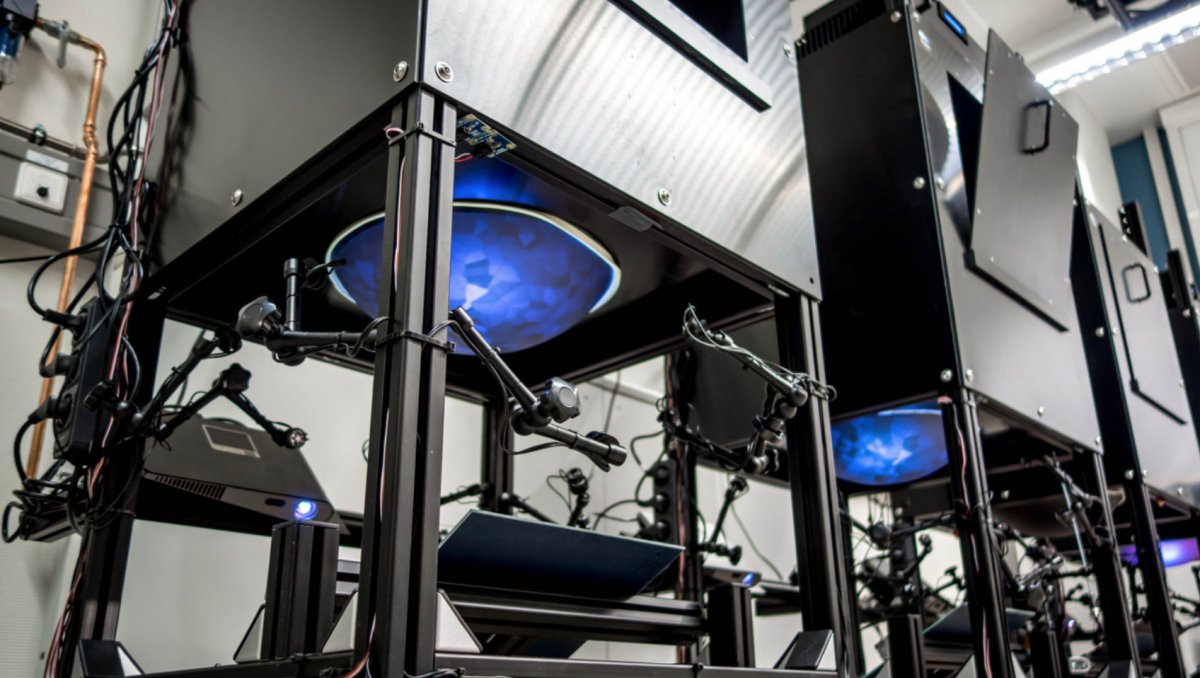

Work in Iain Couzin’s group and in other projects in the Cluster of Excellence "Centre for the Advanced Study of Collective Behaviour" employs bowl-shaped virtual reality systems for fish. Here, the movement of the freely swimming fish is tracked by a multi-camera setup, and the visualisations on the bowl, in this case 3D shapes, are displayed by projecting perspective-corrected images from the bottom of the bowl. Image: lab of Andrew Straw, University of Freiburg.

If VR is the art of calculated deception, then the magician behind the sleight of hand is computer graphics, in particular the powerful graphics cards and fast algorithms born from the booming video game industry. “From what the computer graphics industry can achieve for humans, we are close to perfect,” says Oliver Deussen, a professor of computer graphics at the University of Konstanz and co-director of the Cluster of Excellence “Centre for the Advanced Study of Collective Behaviour”. “Combined with some cool projection systems you can really start fooling humans about reality.”

But of course, it’s not enough to design a virtual world that looks realistic to us. The animals must perceive it as real. Even considering vision alone, many animal species have properties that differ from our own. For instance, the human visual system merges a stream of images into a continuous percept at a refresh rate of at least 30 images per second, whereas in insects this happens only at around 200 images per second.
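The gap between these numbers is easiest to see as a time budget per image. The arithmetic below uses only the two thresholds quoted above and treats fusion as a simple cutoff, which is a simplification: real thresholds vary with species, brightness and contrast. A display refreshing at 60 Hz, a common rate for screens made for people, delivers a new image roughly every 16.7 ms, fast enough to look continuous to us but, by these figures, well short of what an insect would need.

```python
# Illustrative fusion thresholds taken from the text; treat them as rough values,
# since real thresholds depend on species, brightness and contrast.
FUSION_THRESHOLD_HZ = {"human": 30, "insect": 200}


def frame_interval_ms(rate_hz: float) -> float:
    """Time between successive images at a given rate, in milliseconds."""
    return 1000.0 / rate_hz


def looks_continuous(display_hz: float, viewer: str) -> bool:
    """Simplified check: does the display refresh at or above the viewer's
    fusion threshold?"""
    return display_hz >= FUSION_THRESHOLD_HZ[viewer]


for viewer, threshold in FUSION_THRESHOLD_HZ.items():
    print(f"{viewer}: new image needed at least every {frame_interval_ms(threshold):.1f} ms")
print("60 Hz continuous for humans?", looks_continuous(60, "human"))
print("60 Hz continuous for insects?", looks_continuous(60, "insect"))
```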

“Almost every part of a VR system is designed for humans,” says Stephan Streuber, who develops VR tools for the study of human collectives at the Cluster of Excellence “Centre for the Advanced Study of Collective Behaviour”. “If we are to push animal VR to the next level, we should first understand animal perception and take that into account when designing VR systems.”

Extending the value of VR beyond a small handful of well-characterised animals will require a radical rethinking of current hardware and software solutions.

“We are at a point now when coordinated action between biologists, computer scientists and engineers is needed to transform the sophisticated tech we have to fit different species,” says Naik.

In Konstanz, this transformation is already taking place. A forthcoming facility, known as the Imaging Hangar, will offer unprecedented infrastructure for the design of novel experiments in collective behaviour involving virtual environments. The research space, housed within a purpose-built research building for visual computing of collectives at the University of Konstanz, will be a place where computer scientists and biologists work together to develop dedicated hardware for display, sensing, and real-time processing for extending VR studies to new species. “This exceptional facility will allow biologists to collaborate with domain experts in computer vision, computer graphics, and perception and latency, to design the next generation of VR solutions,” says Couzin.

The Imaging Hangar, a 15 m × 15 m × 8 m temperature-controlled room for studying animal and human collectives, will be completed in 2021. State-of-the-art camera and projection systems will create a virtual environment that is both immersive and reactive. A bird, for example, will be able to fly in the space with "holograms" of virtual conspecifics that react to the real bird and vice versa. Image: University of Konstanz.

The insights gleaned from using VR to unlock the mechanisms behind collective behaviour could have far-reaching benefits. In robotics, for example, such insights might help engineers develop more effective and efficient technologies, such as self-organizing robots, self-navigating drones, and micro-robots for disaster relief.

In the shorter term, the work being pursued by Konstanz researchers in this area will pave the way for further studies using this technology. “One of the reasons VR is still relatively rare in the animal sciences is that it’s expensive and technologically daunting,” says Couzin. “But the funding we have to conduct this research in Konstanz will take this technology forward to make it more available and useful for the community.”